For years, warehouse digitalisation has focused on systems that register transactions, manage inventory and coordinate material flow. Yet one crucial part of day-to-day operations has remained largely outside the digital picture: what is actually happening physically on the warehouse floor between system events.

A pallet may be delayed for several minutes before a scanner records the next process step. A route may become congested long before throughput indicators reveal a problem. A safety risk may develop in a specific area without immediately triggering any system alert. In many operations, these moments remain visible to people on site, but invisible to the digital systems responsible for steering the wider process.

As warehouse environments become larger, more automated and increasingly distributed, this blind spot is becoming more relevant. Maintaining a continuous view across all relevant process areas simultaneously becomes more difficult, particularly when activities are running in parallel across goods receipt, storage, picking and despatch. Meanwhile, operational speed and complexity continue to increase. For many logistics organisations, the next step in digital maturity is therefore no longer only about collecting more transactional data, but about capturing operational reality itself in a structured way.

Video data as an operational data source

EPG AURA Observer has been developed to address precisely this gap. As part of the AI-native EPG AURA environment, AURA Observer turns live video streams into a usable operational data layer for logistics execution.

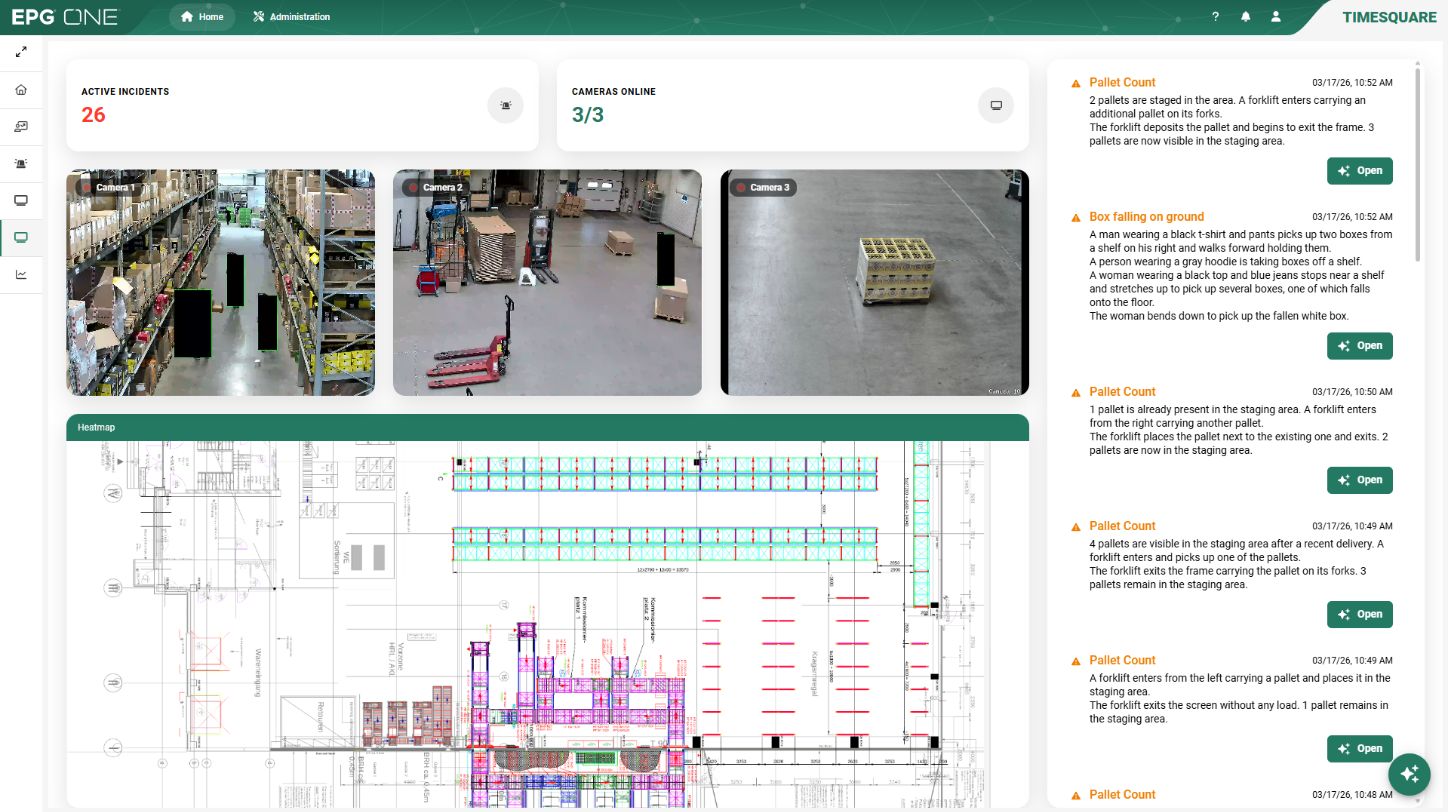

The principle differs fundamentally from conventional video monitoring. Instead of simply displaying camera feeds, the system interprets what is happening in real time and converts relevant observations into structured operational events. Using Intelligent Video Analytics (IVA), movements, interactions, dwell times, disruptions and deviations are continuously detected directly in the warehouse environment. These observations can then be used as status inputs for warehouse management, warehouse control or higher-level decision support systems.
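To make the idea of a "structured operational event" concrete, the following is a minimal sketch in Python, not EPG's implementation. It assumes the video analytics stage already delivers anonymous detections with a timestamp, a track identifier and a named floor zone; the `Observation` and `DwellEvent` schemas, the `DWELL_EXCEEDED` event type and the threshold value are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One detection from the analytics stage (hypothetical schema)."""
    timestamp: float  # seconds since the start of the stream
    object_id: str    # anonymous track id, e.g. "pallet-17"
    zone: str         # named floor zone, e.g. "dock-3"


@dataclass(frozen=True)
class DwellEvent:
    """Structured event a downstream system could consume (hypothetical)."""
    event_type: str
    object_id: str
    zone: str
    started_at: float
    dwell_seconds: float


def detect_dwell_events(observations, threshold_seconds):
    """Emit one event per stay in a zone once it reaches the threshold."""
    events = []
    current = {}      # object_id -> (zone, time first seen in that zone)
    reported = set()  # stays for which an event was already emitted
    for obs in sorted(observations, key=lambda o: o.timestamp):
        zone, first_seen = current.get(obs.object_id, (None, None))
        if zone != obs.zone:
            # Object appeared or changed zone: restart its dwell timer.
            current[obs.object_id] = (obs.zone, obs.timestamp)
            continue
        dwell = obs.timestamp - first_seen
        stay = (obs.object_id, zone, first_seen)
        if dwell >= threshold_seconds and stay not in reported:
            reported.add(stay)
            events.append(
                DwellEvent("DWELL_EXCEEDED", obs.object_id, zone, first_seen, dwell)
            )
    return events


# Illustrative input: a pallet that stays at one dock longer than three minutes.
sample = [
    Observation(0.0, "pallet-17", "dock-3"),
    Observation(60.0, "pallet-17", "dock-3"),
    Observation(200.0, "pallet-17", "dock-3"),
    Observation(260.0, "pallet-17", "dock-3"),
]
for event in detect_dwell_events(sample, threshold_seconds=180):
    print(event)
```

The point of the sketch is the shape of the output: a small, typed record that a warehouse management or control system could treat like any other status input, rather than a video frame that a person has to interpret.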

For the first time, video data becomes part of operational logic rather than remaining a passive visual resource.

Interpreting operational context in real time

The real significance of visual analysis begins where observation turns into interpretation. In a warehouse environment, movement alone rarely explains whether a situation matters operationally. What matters is context: whether a delay is part of a normal sequence, a route obstruction is temporary or disruptive, or a deviation signals a broader process issue.

This is where EPG AURA Observer adds a different level of intelligence. By combining visual analysis with vision-language models, the system can distinguish between routine activity and situations that carry operational relevance. A blocked emergency route, an unusual accumulation of pallets in one area, interruptions in material flow or movement patterns that differ from established process behaviour can be recognised as distinct events and classified accordingly.

The result is that operational teams are not confronted with raw camera input, but with information that already carries meaning within the logic of warehouse operations. Situations can be prioritised more quickly because the system presents them in a form that aligns with how decisions are made on site: not as isolated images, but as process-relevant signals that can be understood immediately and acted upon within existing routines.

A continuous view across multiple camera perspectives

A particular strength of AURA Observer lies in its ability to analyse movement across several camera positions simultaneously. In many warehouse environments, camera systems provide isolated images of specific areas, but no coherent view of how people, forklifts or pallets move through larger operational zones. AURA Observer links these perspectives and follows objects and movements across multiple viewpoints. The result is a continuous operational picture in which travel paths, overlaps, dwell zones and frequently congested areas become measurable, something that previously required extensive manual observation.

Heatmaps generated from this data make recurring bottlenecks visible and support decisions about layout, routing and process adjustments on a factual basis. This is particularly valuable where assumptions about traffic patterns and process interruptions have until now depended largely on local experience rather than consistent operational evidence.
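As a rough illustration of how such a heatmap can be derived, the sketch below counts observed positions per grid cell of the floor plan. It assumes that positions from all cameras have already been projected into one shared floor coordinate system measured in metres; that projection step, the function names and the cell size are assumptions made for this example and do not describe how AURA Observer works internally.

```python
import math
from collections import Counter


def build_heatmap(positions, cell_size_m=1.0):
    """Count observed floor positions per square grid cell.

    positions: iterable of (x, y) floor coordinates in metres.
    Returns a Counter mapping (column, row) cell indices to counts.
    """
    counts = Counter()
    for x, y in positions:
        cell = (math.floor(x / cell_size_m), math.floor(y / cell_size_m))
        counts[cell] += 1
    return counts


def busiest_cells(heatmap, top_n=3):
    """Return the top_n most frequently occupied cells with their counts."""
    return heatmap.most_common(top_n)


# Illustrative positions, e.g. sampled once per second from tracked objects.
positions = [(0.2, 0.3), (0.8, 0.9), (1.5, 0.1), (0.5, 0.5), (3.2, 4.7)]
heatmap = build_heatmap(positions, cell_size_m=1.0)
print(busiest_cells(heatmap, top_n=1))
```

The cell size is the main design choice: smaller cells localise a bottleneck more precisely but need more observations before the counts become meaningful.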

Built for operational acceptance

Any technology that interprets visual activity in a warehouse environment inevitably raises questions beyond technical performance alone. Acceptance depends just as much on how clearly its purpose is defined as on what it is technically capable of delivering. For that reason, AURA Observer has been designed so that all visual data is processed in anonymised form, and no personal information is stored. The focus is not on observing individuals, but on recognising operationally relevant situations within ongoing processes and translating them into structured events that can support day-to-day decision-making.

This distinction becomes especially important in environments where transparency must improve without creating unnecessary friction in implementation. The fact that AURA Observer identifies blocked routes, unusual dwell times or safety-related deviations without establishing person-based monitoring is therefore not only a technical design choice, but a practical condition for deployment in real warehouse operations.

The analytical capability behind this approach has been developed in cooperation with NVIDIA. By using NVIDIA’s Metropolis platform, AURA Observer is able to combine object recognition, motion analysis and event classification in real time under industrial conditions. This provides the technical flexibility to run the system either as part of an integrated IT landscape or independently in selected warehouse areas, depending on how companies want to introduce visual intelligence into their existing operations.

Recognition at LogiMAT 2026

The practical relevance of this approach was recently recognised at LogiMAT 2026, where EPG AURA Observer received the Best Product LogiMAT 2026 award in the category Software, Communication & IT. For EPG, this marks the third time the company has received one of the exhibition’s major innovation awards, following recognition in 2014 and 2023.

What made the award particularly notable this year was the timing. AURA was introduced to a broader professional audience for the first time at this year’s exhibition, and the distinction for AURA Observer immediately underlined which aspect of the new environment attracted particular attention: the ability to make operational processes visible where conventional system logic still reaches its limits.

The award also reflects a broader development currently shaping logistics technology. As warehouse environments become more dynamic and operational complexity continues to grow, interest is shifting towards solutions that do not simply process existing system data more efficiently but open up entirely new sources of operational insight. In that sense, the recognition for AURA Observer is not only a product award, but also a sign of where many logistics organisations currently see practical value in the next stage of digital development.

A practical entry point: the Observer Box

Alongside the full AURA Observer capability, EPG also offers the Observer Box as a pre-configured edge solution designed for rapid deployment in operational environments. Hardware, software and pre-installed AI models are combined in one ready-to-use unit that can be introduced directly in selected warehouse zones without requiring a broader infrastructure project or complex integration work from the outset.

This makes it possible to begin with clearly defined operational questions in one specific area, evaluate the practical effect under real conditions and expand gradually where additional visibility proves useful. Especially in larger warehouse environments, where introducing new technology often has to align with ongoing operations, this creates a pragmatic way to make visual intelligence available without turning implementation itself into a major project.

For many companies, that is likely to be the most realistic starting point: not a large-scale rollout, but a focused first application where operational value becomes visible quickly and where new insights can be linked directly to everyday decisions.

Further information on the Observer Box and deployment scenarios is available from EPG.